Last Updated on January 2, 2026 by Sam Gupta

Regardless of how cutting-edge ERP technology might be, your current enterprise data is a major driver of the operational efficiency you will gain (or, just as likely, lose) with your newly implemented ERP system. Understanding ERP data re-engineering issues requires mastery of cross-disciplinary processes, information modeling, and multi-system expertise. Most importantly, it requires enterprise alignment of different processes across system boundaries. The unfortunate part about ERP data re-engineering is that even seasoned consultants struggle with it.

The biggest disconnect is always in understanding the difference between data flows and UI/system flows, as the two are generally intertwined. ERP data re-engineering is also extremely challenging because it can disrupt legacy processes and transactions. So, unfortunately, there is no clean slate. The only option is to find a middle ground, which tends to drive overengineering of your processes and architecture.

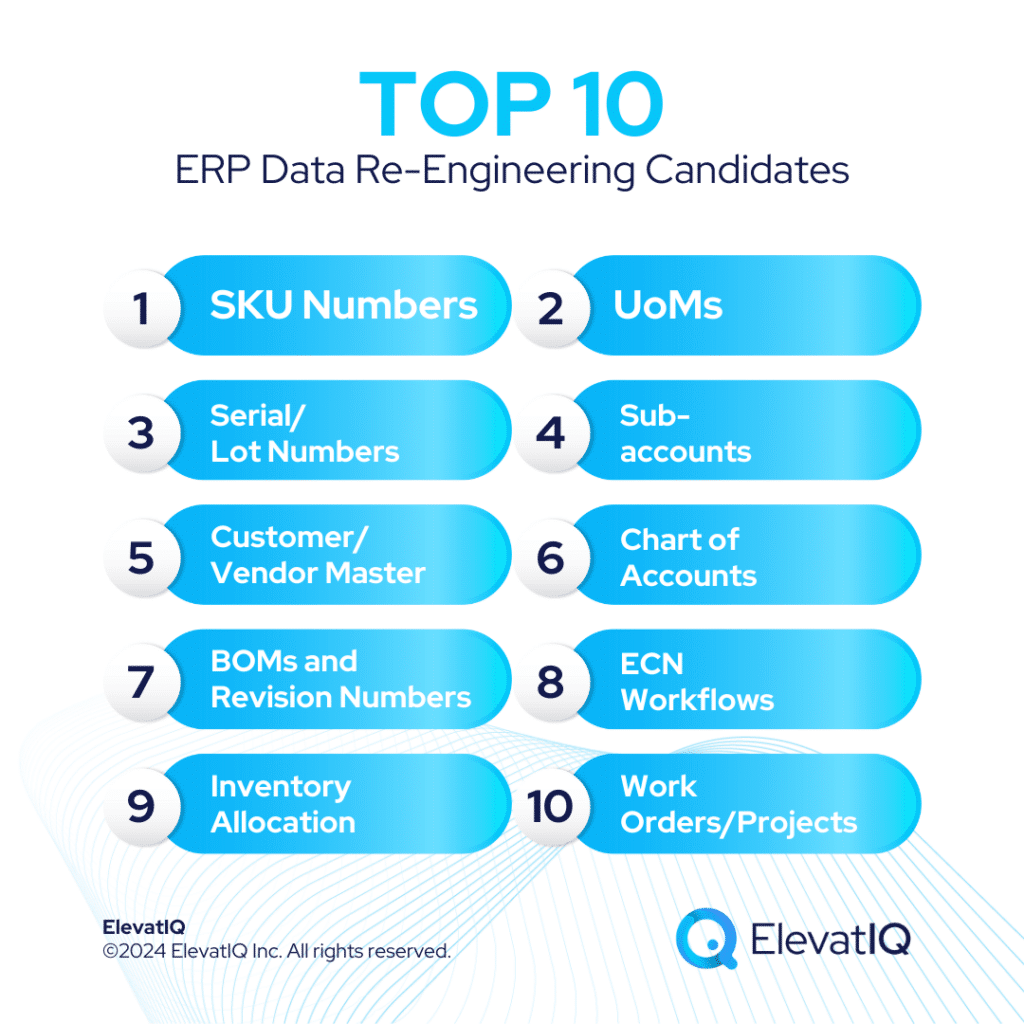

ERP data re-engineering issues also make each ERP implementation unique. In other words, two businesses with nearly identical operations might see very different ERP outcomes because of differences in their current data. Your data issues might be even more severe if you lived on a poorly architected system (home-grown or commercial) that deviated substantially from the enterprise software data dictionary. That’s probably why moving from QuickBooks or a mainframe to an ERP is more challenging than moving between two standard ERP packages. The only way to cure these issues is a rollout strategy, which requires careful planning and enterprise-level control (and governance) over several years. The following candidates are the most common culprits requiring ERP data re-engineering.

10. Work Orders/Projects

Common Issues

- Separate work orders for each routing step. Legacy systems could not handle long-running transactions, which forced companies to segment work into multiple work orders. Many companies still carry these as part of their information model.

- Not combining all steps of a project within it. Service or engineering costs are adjusted through GL entries rather than incorporated into the project workflow. This leads to skewed total costs of projects and products/services.

- Not implementing co- and by-products using out-of-the-box workflows. Companies struggle with them for two reasons: 1) their existing ERP system does not support the capability, and 2) they do not fully understand the intricacies of implementing them properly.

Risks

- Misleading total costs of projects, services, and products. The disconnected information model and cost layers lead to misleading total costs.

- Overengineering of downstream workflows. Companies need to overengineer their downstream processes to resolve issues caused by these over-engineered datasets.

- Increased admin efforts to connect each work order to get consolidated insights. Connecting these individual cost drivers requires substantial admin efforts to keep track of micro profitability and costs.

Solution

- Understand the out-of-the-box data model deeply before implementing it. Don’t rush to implement. Spend time to understand the intricacies of out-of-the-box data models.

- Re-engineer your data model before implementing it. Re-engineer as much as you can, and do it at the enterprise level, involving every function rather than taking a siloed approach.

- Vet the data model of ERP systems before buying them. Compare the data model of newer systems before selecting them. Buy only systems that closely follow enterprise software data dictionaries.
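To make the work order discussion concrete, here is a minimal sketch of a single work order that carries all of its routing steps, so that total cost rolls up from the steps instead of being stitched together across separate work orders or adjusted through GL entries. The class names, fields, and figures are illustrative assumptions, not the data model of any particular ERP.

```python
from dataclasses import dataclass, field
from decimal import Decimal
from typing import List


@dataclass
class RoutingStep:
    # One operation inside a work order (hypothetical fields).
    name: str
    labor_cost: Decimal
    material_cost: Decimal


@dataclass
class WorkOrder:
    number: str
    steps: List[RoutingStep] = field(default_factory=list)

    def total_cost(self) -> Decimal:
        # Costs roll up from the routing steps; nothing is adjusted by hand.
        return sum(
            (s.labor_cost + s.material_cost for s in self.steps),
            Decimal("0"),
        )


# Illustrative data only.
wo = WorkOrder("WO-1001", [
    RoutingStep("Cut", Decimal("40.00"), Decimal("120.00")),
    RoutingStep("Weld", Decimal("65.50"), Decimal("15.25")),
    RoutingStep("Paint", Decimal("30.00"), Decimal("22.75")),
])
print(wo.total_cost())  # 293.50
```

With the steps held under one work order, a consolidated cost is a single lookup rather than an admin exercise in connecting several work orders.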

9. Inventory Allocation

Common Issues

- Different allocation states in different systems. Companies that struggle with organizational alignment often end up keeping the allocation algorithm in multiple systems, often leading to frequent departmental confrontations.

- Warehouses and channels don’t have their own inventory for allocation and reconciliation to work. Without a formal strategy for warehouses, locations, and channels, companies often end up mixing them, throwing off the allocation equation.

- Ad-hoc cycle counting processes. These lead to inventory discrepancies that throw the allocation equation out of balance.

- No formal allocation strategy or governance process. Without a formal allocation strategy and governance, the allocation equation very rarely balances for companies, causing substantial inventory issues.

Risks

- Customer experience issues. For example, customers may be unable to place orders, or inventory may not be available in the right quantity when they need it.

- On-time delivery issues. Allocation issues lead to not being able to reserve inventory for the right customer at the right time, leading to on-time delivery issues.

- Firefighting among departments. It is not uncommon for departments to steal inventory from each other or to physically block it off without recording it in the system.

- Substantial inventory planning problems. Even minor discrepancies can lead to substantial consolidated planning issues.

Solution

- Map SKUs per channel and have a dedicated inventory pool per channel. Not only maintain the SKUs per channel but also keep an accurate account of inventory per channel.

- Design enterprise allocation flows. The allocation flows are not possible unless all departments agree on a centralized allocation strategy across enterprise boundaries.

- Implement formal cycle counting processes for each inventory pool, and ensure that physical and digital inventory stay closely aligned.
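The allocation equation mentioned above can be sketched per inventory pool as available = on hand - allocated. The example below is a simplified illustration under that assumption; the `InventoryPool` class, its fields, and the quantities are hypothetical, and a real ERP tracks more states (in transit, on hold, and so on).

```python
from dataclasses import dataclass


@dataclass
class InventoryPool:
    # One dedicated pool per channel and SKU (hypothetical model).
    channel: str
    sku: str
    on_hand: int
    allocated: int = 0

    @property
    def available(self) -> int:
        # The allocation equation for this pool.
        return self.on_hand - self.allocated

    def allocate(self, qty: int) -> None:
        if qty <= 0:
            raise ValueError("quantity must be positive")
        if qty > self.available:
            raise ValueError(
                f"only {self.available} available in {self.channel}"
            )
        self.allocated += qty

    def cycle_count(self, counted: int) -> int:
        # Record the physical count and return the variance
        # (physical count minus system quantity).
        variance = counted - self.on_hand
        self.on_hand = counted
        return variance


retail = InventoryPool("retail", "SKU-1", on_hand=100)
retail.allocate(30)
print(retail.available)        # 70
print(retail.cycle_count(95))  # -5
print(retail.available)        # 65
```

Because each channel owns its pool, a shortage in one channel surfaces as a failed allocation there instead of silently consuming another channel's stock.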

8. ECN Workflows

Common Issues

- No formal ECN processes. Companies struggle to implement formal ECN processes as that requires aligning product models and a streamlined change process.

- Allowing changes throughout the process without a formal governance plan. This is especially prevalent in industries where a formal product may not exist, such as construction, sign manufacturing, or custom machinery. Their processes are different from product-centric industries, and they believe that the ECN processes might not work for them.

- No formally defined product model, making implementing ECN workflows incredibly challenging.

Risks

- Downstream issues with costing and scheduling. The shortcuts taken for the ECN process will lead to overengineered downstream processes, leading to costing and scheduling challenges.

- Issues with SKU number maintenance. ECN issues might lead to multiple SKUs for each change, or to product models that are not connected to each other, driving substantial part maintenance issues.

- Misleading insights. Not accounting for the costs of changes appropriately will lead to misleading insights.

Solution

- Define the product model, even if you feel that you don’t have one. The foundation of ECN is a streamlined product model.

- Formalize ECN processes. Build ECN workflows and build consensus with all stakeholders involved throughout the ECN process to ensure compliance.

- Re-engineer legacy processes bloated by poorly implemented ECN controls. Before implementing a new ERP, re-engineer any legacy processes that became bloated because of poor ECN implementation.

7. BOMs and Revision Numbers

Common Issues

- Revision numbers not utilized. Companies create a separate SKU for each revision instead. This is a major issue for companies that don’t have a formalized process for maintaining revisions.

- Return implemented as a routing step. This is common with companies that may not have had return capabilities baked as part of their legacy system and had to take a shortcut to implement it. But these companies still carry them as part of their information model.

- Mixing of different hours limits the traceability of different activities. Companies on legacy systems that didn’t support reporting of hours individually or took shortcuts because of the perceived increased effort generally struggle with the traceability of different activities and their cost implications.

Risks

- Costing and scheduling issues with BOMs. The issues with the underlying structure of BOMs and revisions generally lead to substantial costing and scheduling issues.

- Unreliable insights produced despite substantial admin effort reconciling various data silos.

Solution

- Implement BOMs and revision process using the out-of-the-box functionality. Understand the intricacies of out-of-the-box capabilities of BOMs and revision numbers and implement them without hijacking any existing workflows.

- Re-engineer BOMs and revision numbers as much as possible before implementing a new ERP system. Don’t implement them as is. Analyze BOMs and revision numbers and assess if re-engineering them might be possible without disrupting the support for legacy processes and transactions.

- Reduce manual intervention for BOM data entry. The more manual intervention you have in the process, the more data quality issues you are going to have. So, where possible, replace manual entry of BOMs and revisions with a CAD add-on.
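As a rough illustration of keeping revisions under one SKU rather than creating a new SKU per revision, the sketch below stores a BOM per revision on a single item. The `Item` class, the part numbers, and the quantities are assumptions made for the example; actual ERP revision control also involves effectivity dates and approvals.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Item:
    sku: str
    # revision -> BOM lines as {component SKU: quantity per parent}
    boms: Dict[str, Dict[str, float]] = field(default_factory=dict)
    current_revision: Optional[str] = None

    def release(self, revision: str, bom: Dict[str, float]) -> None:
        # A released revision is never overwritten, only superseded.
        if revision in self.boms:
            raise ValueError(f"revision {revision} already exists for {self.sku}")
        self.boms[revision] = dict(bom)
        self.current_revision = revision

    def bom(self, revision: Optional[str] = None) -> Dict[str, float]:
        rev = revision or self.current_revision
        if rev is None:
            raise LookupError(f"{self.sku} has no released revision")
        return self.boms[rev]


bracket = Item("BRKT-100")
bracket.release("A", {"PLATE-10": 1, "BOLT-M8": 4})
bracket.release("B", {"PLATE-10": 1, "BOLT-M8": 6})

print(bracket.current_revision)     # B
print(bracket.bom()["BOLT-M8"])     # 6
print(bracket.bom("A")["BOLT-M8"])  # 4
```

The SKU stays stable across changes, so history, costing, and planning all attach to one item while each revision remains traceable.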

6. Chart of Accounts

Common Issues

- Too verbose. Companies outgrowing accounting software or smaller ERP systems tend to have flat, verbose charts of accounts because those systems offer a limited number of hierarchies and layers.

- Chart of accounts barely mapped with lean hierarchies. The legacy systems might support the mapping of a chart of accounts, but the hierarchies may be leaner, limiting the traceability.

- Reconciling through GL entries. Companies outgrowing smaller systems have a tendency to reconcile account balances using GL entries.

Risks

- Maintenance issues. The verbose chart of accounts would increase the admin efforts in maintaining the flatter hierarchy and reconciling them.

- Challenging to get insights from ERPs. Getting insights may not be possible with the flatter hierarchy as the underlying layers may not be enough for the required traceability.

- Significant financial control issues. The practice of updating GL balances directly may cause substantial financial control issues because of the limited traceability of the original transactions. GL entries should be limited to non-operational ad-hoc transactions and definitely not to reconcile operational accounts.

Solution

- Model chart of accounts after the system-provided chart of accounts. ERP charts of accounts are different from accounting software, and they need to be re-engineered and mapped in appropriate hierarchies to get desired insights.

- Utilize ERP hierarchies rather than making the chart verbose. Follow ERP hierarchies as much as possible. Bypassing them may cause traceability issues.

- Avoid direct GL entries for operational transactions. Avoid GL entries for operational accounts as much as possible, especially accounts that might be harder to reconcile, such as inventory or channels.
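To show why hierarchy matters for insights, here is a small sketch of a hierarchical chart of accounts where postings hit only the lowest-level accounts and balances roll up through parents. The account numbers, hierarchy, and amounts are invented for illustration and do not reflect any system-provided chart.

```python
from collections import defaultdict
from typing import Dict, Optional

# account -> parent account (None marks the top of the hierarchy)
PARENT: Dict[str, Optional[str]] = {
    "4000": None,    # Revenue
    "4100": "4000",  # Product revenue
    "4110": "4100",  # Product revenue, online channel
    "4120": "4100",  # Product revenue, retail channel
    "4200": "4000",  # Service revenue
}


def rollup(parent: Dict[str, Optional[str]],
           posted: Dict[str, int]) -> Dict[str, int]:
    # Add each posted amount to the account and to every ancestor.
    totals: Dict[str, int] = defaultdict(int)
    for account, amount in posted.items():
        node: Optional[str] = account
        while node is not None:
            totals[node] += amount
            node = parent[node]
    return dict(totals)


# Amounts in whole currency units, posted by operational transactions.
posted = {"4110": 1200, "4120": 800, "4200": 500}
totals = rollup(PARENT, posted)
print(totals["4100"])  # 2000
print(totals["4000"])  # 2500
```

A flat chart would need a separate account (and manual reconciliation) for every combination that the hierarchy expresses structurally.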

5. Customer/Vendor Master

Common Issues

- Customer/vendor master model mixed with another master data business object. Companies end up mixing the master data models, such as implementing 3-tier hierarchies of the customer master using a dropdown on a product maintenance screen.

- Inconsistent modeling of child and parent business objects. Mixing child objects with parents, such as implementing ShipTos as customers or vice versa.

- Three-tiered hierarchies are not maintained in the system. 3-tier hierarchies, such as buying groups or holding companies, should be implemented using out-of-the-box capabilities. Instead, companies take shortcuts such as making them 2-tier and then overengineering processes to get insights.

- Retail customers modeled as orders. Making decisions such as bundling all retail transactions under one customer account causes substantial performance and maintenance issues.

Risks

- Slowed customer service. Overly bloated business objects cause performance issues, increasing the total time required to serve customers.

- Overengineered workflows. Overengineered workflows may require further over-engineering to overcome the shortcomings of underlying workflows.

- Scalability issues. Mixing technologies without understanding the implications, such as using EDI to integrate internal customer channels, may lead to scalability issues with codes, causing issues with onboarding new customers.

Solution

- Maintain the natural hierarchy of business objects. Don’t deviate from the real-world hierarchy of the customers and re-engineer it if possible to align with the selected ERP system.

- Have a master data governance plan in place. Implement the plan using workflow technologies if possible, to reduce the variation in inputs touching the customer master.

- Limit the creation of customers/vendors to power users. Limit the number of users adding records impacting master data.
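The natural three-tier hierarchy described in this section (buying group, customer, ship-to) can be sketched as separate objects with explicit parent links. The class names, fields, and sample data are hypothetical and serve only to show that sales roll up cleanly when ship-tos are not modeled as customers.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Customer:
    customer_id: str
    name: str
    buying_group: Optional[str] = None  # tier 1, if the customer has one


@dataclass(frozen=True)
class ShipTo:
    ship_to_id: str
    customer_id: str  # tier 3 always belongs to a tier 2 customer
    city: str


def sales_by_buying_group(customers: List[Customer],
                          ship_tos: List[ShipTo],
                          order_totals: Dict[str, int]) -> Dict[str, int]:
    # Roll order totals (keyed by ship-to id) up to the buying group,
    # falling back to the customer when no group exists.
    customer_by_id = {c.customer_id: c for c in customers}
    ship_to_by_id = {s.ship_to_id: s for s in ship_tos}
    totals: Dict[str, int] = defaultdict(int)
    for ship_to_id, amount in order_totals.items():
        customer = customer_by_id[ship_to_by_id[ship_to_id].customer_id]
        totals[customer.buying_group or customer.customer_id] += amount
    return dict(totals)


customers = [
    Customer("C1", "North Stores", buying_group="BG1"),
    Customer("C2", "South Stores", buying_group="BG1"),
    Customer("C3", "Independent Shop"),
]
ship_tos = [
    ShipTo("S1", "C1", "Toronto"),
    ShipTo("S2", "C1", "Ottawa"),
    ShipTo("S3", "C2", "Austin"),
    ShipTo("S4", "C3", "Denver"),
]
result = sales_by_buying_group(
    customers, ship_tos, {"S1": 100, "S2": 50, "S3": 25, "S4": 10}
)
print(result)  # {'BG1': 175, 'C3': 10}
```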

4. Sub-accounts

Common Issues

- Implementing too many unnecessary sub-accounts. Companies with a limited understanding of sub-accounts might end up using too many of them, adding unnecessary steps with each transaction.

- Not modeling the right dimensions with the sub-accounts to get the desired traceability. Not implementing sub-accounts where they fit leads to uncontrolled growth of the chart of accounts or overengineered processes.

- Implementing sub-accounts as a chart of accounts. Implementing sub-accounts as the chart of accounts causes charts to be too verbose.

Risks

- Slowed processes because of unnecessary data entry at each step. Entering the data required for sub-accounts adds steps to each process and may slow down operations.

- Lost traceability. Not modeling sub-accounts where they would be appropriate leads to lost traceability of transactions.

- Bloated chart of accounts. The verbose chart of accounts causes sustainability issues in the long term.

Solution

- Thoroughly analyze sub-accounts. Think 10x before deciding to implement sub-accounts. They can break your entire implementation and are much harder to reverse.

- Don’t implement too many sub-accounts if they are not really part of the operational workflow. Unless absolutely required by operational workflows, don’t implement them.

- Implement sub-accounts in the FP&A software if possible. The FP&A software provides much more flexibility in adding more dimensions without disrupting operational workflow.

3. Serial/Lot Numbers

Common Issues

- Using project numbers or dates as serial numbers. Companies often mimic serial or lot numbers using arbitrary values such as project numbers or dates, and then have to build an entire workflow to support serial or lot number processing that would have been available out-of-the-box with the system.

- Mixing of serial or lot numbers. Companies that don’t fully understand the lot and serial numbers end up mixing the schemes, causing traceability issues and increased admin efforts.

- Not implementing lot numbers appropriately. Some companies that may not fully appreciate the out-of-the-box lot control functionality end up hijacking processes and overengineering workflows.

Risks

- Traceability issues with serial and lot numbers. The biggest implication would be traceability as you build workflows that are dependent upon serial and lot numbers.

- Hijacked processes lead to further over-engineering. The ERP processes are so interdependent and intertwined that once you hijack one process, the dependent processes would start breaking and would require further hijacking.

Solution

- Use the serial numbers model as provided by the system. Understand the workflow fully. Buy a system that has serial number capabilities aligned with your processes.

- Don’t hijack out-of-the-box workflows to accommodate broken processes. Stop the urge to hijack processes. Instead, invest effort in understanding the intricacies of the out-of-the-box processes.
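The distinction between lot and serial tracking can be illustrated with a small validation sketch: a serial number identifies exactly one unit, while a lot number can cover many units, and the receipt date is its own field rather than a stand-in for either. The `Tracking` enum, `Receipt` class, and identifiers below are assumptions for the example, not an ERP's actual schema.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Tracking(Enum):
    NONE = "none"
    LOT = "lot"
    SERIAL = "serial"


@dataclass(frozen=True)
class Receipt:
    sku: str
    tracking: Tracking
    identifier: str    # lot number or serial number, empty if untracked
    quantity: int
    received_on: date  # kept separately, never used as the identifier


def validate(receipt: Receipt) -> None:
    # A serial number identifies exactly one unit; a lot may cover many.
    if receipt.tracking is Tracking.SERIAL and receipt.quantity != 1:
        raise ValueError("a serial number must identify exactly one unit")
    if receipt.tracking is not Tracking.NONE and not receipt.identifier:
        raise ValueError("tracked items need a lot or serial identifier")


lot_receipt = Receipt("RESIN-5", Tracking.LOT, "LOT-0042", 500, date(2026, 1, 2))
validate(lot_receipt)  # passes: one lot covering 500 units

bad_serial = Receipt("PUMP-9", Tracking.SERIAL, "SN-7781", 3, date(2026, 1, 2))
try:
    validate(bad_serial)
except ValueError as err:
    print(err)  # a serial number must identify exactly one unit
```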

2. UoMs

Common Issues

- UoMs don’t mimic the natural hierarchy of data. Companies that don’t use a natural hierarchy compliant with sales, purchase, and production processes often struggle with substantial planning or operational issues. For example, modeling a product that is generally sold in rolls as EA (each) would cause downstream issues.

- UoMs modeled as dropdown options or form fields as opposed to coding at the SKU level. Companies that don’t fully understand the difference between the data and UI flows end up implementing UoMs as dropdown values.

- Purchase, production, or consumption UoMs not modeled appropriately. Companies that don’t fully understand the implications end up opting out from modeling each of these categories, causing issues with the downstream processes.

Risks

- Over-customization of workflows to fix the issues caused by data. Unnecessary hierarchies of data often drive substantial overengineering of downstream processes and operational inefficiencies.

- Business performance issues, such as with MRP. Not following the natural hierarchies of UoMs means MRP suggestions need substantial admin effort before they are meaningful for business operations. It also erodes trust in system data.

Solution

- Follow the natural hierarchy of UoMs. Trace every single transaction and vendor, and identify the UoMs in which materials are being sold, procured, or consumed.

- Fix UoM issues before implementing a new system. Re-engineer UoMs prior to selecting and implementing a new system. Not aligning UoMs will lead to substantial planning issues even with the new system.
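As a sketch of coding UoMs at the SKU level, the example below stores purchase, stocking, and sales UoMs on the SKU along with conversion factors to a base unit. The `SkuUoms` class, the product, and the conversion factors (150 FT per roll, 40 rolls per pallet) are made up for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Dict, Union


@dataclass
class SkuUoms:
    sku: str
    base_uom: str      # stocking unit
    purchase_uom: str
    sales_uom: str
    # How many base units one unit of each UoM contains.
    per_base: Dict[str, Fraction]

    def convert(self, qty: Union[int, Fraction],
                from_uom: str, to_uom: str) -> Fraction:
        # Convert through the base unit using exact fractions.
        return Fraction(qty) * self.per_base[from_uom] / self.per_base[to_uom]


# Hypothetical roll goods: stocked in feet, bought by pallet, sold by roll.
film = SkuUoms(
    sku="FILM-200",
    base_uom="FT",
    purchase_uom="PALLET",
    sales_uom="ROLL",
    per_base={
        "FT": Fraction(1),
        "ROLL": Fraction(150),
        "PALLET": Fraction(6000),
    },
)

print(film.convert(2, "PALLET", "ROLL"))  # 80
print(film.convert(75, "FT", "ROLL"))     # 1/2
```

Because the conversions live with the SKU, purchasing, production, and sales can each transact in their natural unit while planning sees one consistent base quantity.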

1. SKU Numbers

Common Issues

- Flat SKU numbers without hierarchy. Modeling revision numbers as SKUs. Implementing each UoM as individual SKUs. Flattening the variable or dimensional inventory. These are examples of where companies flatten their inventory.

- Too much intelligence built into SKU numbers. Companies on legacy SKUs that they can’t retire might have substantial intelligence built as part of the numbers or in the description, which leads to scalability and maintenance issues.

- Mixing of automated and manual numbering schemes. The numbering scheme becomes inconsistent. Using an outside number generator to create SKUs, without the system’s native controls, might lead to maintenance issues with inventory.

Risks

- Difficulty in extracting SKU-level insights. Intelligence built into SKUs, or flattened SKUs, can make extracting SKU-level insights challenging and require substantial admin effort to reconcile the parts of each SKU.

- Challenging to plan at the SKU level. Most planning cycles run at the SKU level and assume reliable SKU-level data. Poorly modeled SKUs make that data hard to extract.

- Misleading business decisions. Flattening of SKUs and the inventory model being all over the place lead to misleading business decisions.

Solution

- Don’t build too much intelligence with SKUs. Be especially careful with any intelligence embedded as part of the SKUs or descriptions.

- Use the automated numbering scheme that is natively built into the system.

- Clean the SKUs before implementing a new system. Try to re-engineer the SKUs as much as possible before implementing a new system. And if ERP data re-engineering is not possible, try to come up with a rollout plan.
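The idea of keeping intelligence out of the SKU number can be sketched as a plain sequential number with the descriptive details stored as attributes. The registry below is a toy stand-in for the system's native numbering, and the attribute names (`family`, `color`, `size`) are hypothetical.

```python
from dataclasses import dataclass
from itertools import count
from typing import Dict, List


@dataclass(frozen=True)
class Sku:
    number: str  # carries no meaning beyond identity
    family: str
    color: str
    size: str


class SkuRegistry:
    # Toy stand-in for an ERP's native automated numbering.
    def __init__(self, start: int = 100000) -> None:
        self._next = count(start)
        self._by_number: Dict[str, Sku] = {}

    def create(self, family: str, color: str, size: str) -> Sku:
        sku = Sku(str(next(self._next)), family, color, size)
        self._by_number[sku.number] = sku
        return sku

    def find(self, **attrs: str) -> List[Sku]:
        # Query by attribute instead of parsing the SKU number.
        return [
            s for s in self._by_number.values()
            if all(getattr(s, key) == value for key, value in attrs.items())
        ]


registry = SkuRegistry()
registry.create("T-SHIRT", "red", "M")
registry.create("T-SHIRT", "blue", "M")
registry.create("HOODIE", "red", "L")

print([s.number for s in registry.find(color="red")])  # ['100000', '100002']
```

Adding a new attribute later, such as material, changes the record rather than the numbering scheme, which is what keeps the SKU numbers maintainable.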

Final Words

Unfortunately, there is no way to get rid of ERP data re-engineering issues due to the need to support legacy processes and transactions. But not re-engineering them prior to implementing an ERP can lead to overengineered processes and overly bloated systems, sometimes leading to overall operational efficiency loss despite your investments.

So if you are thinking of replacing your ERP, invest some time thinking through how you plan to re-engineer your current data. You also need to find a system (or combination of systems) that’s close to your current information model. But don’t stop there: ERP data re-engineering requires a rollout plan spanning years if you want to get anywhere close to getting rid of all of your ERP data issues.